The seventh tension

Recap of AIxED panel on Real Educators Learning AI in Real Time

I was on a panel last Saturday at AIxED, an AI in education conference in Boston. The panel was called Real Educators Learning AI in Real Time, and the framing came from Sideby: how educators are figuring out how to use AI in their actual work, not what AI can do. Kippy Smith moderated. The other panelists were Yousuf Marvi, an 8th-grade math teacher in Irvine Unified, currently in his first year teaching English Language Development, and Anne Fensie, who directs the Center for Teaching and Learning at the University of Maine at Presque Isle and works mostly with adult learners in a competency-based program.

Kippy opened by asking each of us for our why for using AI. Anne named learning itself. She wants AI to help where help is appropriate and not to flatten the productive struggle that builds the skill. I named educator agency, with a debt to Punya Mishra: no technology was originally designed for education, and educators have always had to adapt what was built for somebody else. AI’s offer is that it puts educators in the designer’s seat for once. Yousuf named his students. The multimodality of current AI means he no longer has to rely on text alone to understand what a novice language learner is doing in geometry. Kippy named the pattern: none of us led with personal efficiency.

The conversation kept circling back to friction. Yousuf put it most cleanly with an effort-to-output ratio. When the ratio is close to one, learning is happening. When it drops too low, learning collapses. Anne quoted Daniel Willingham (memory is the residue of thought) and offered a triumph and a disaster from her own practice. The triumph: she learned enough Python through AI assistance to scrape 30,000 email addresses for her dissertation survey, something that had previously been out of reach. The disaster: she followed AI’s instructions during a database update and overwrote two-thirds of her course content with no backup, then spent ten hours of a conference manually restoring it from change history. The disaster made her more critical of every output. I argued that real learning shows up in AI’s failures, where students see why human expertise matters, and that my work with faculty is mostly about preserving the friction that AI is engineered to remove.

Two audience contributions reshaped the room. Stephen, who had spent the prior week at an agentic AI corporate event, pushed back on schools designing learning from the inside out, starting with learning instead of with outcomes. His line: curriculum should be designed to uncover content, not cover it. Jenna, an AI product manager who sits on a school council where her district is barely engaging with AI, asked the practical question: how do you actually start? Anne’s answer was small daily reps. Mine was to stop believing the hype that everyone else is ahead, and to start with real low-stakes problems. (Mine: a tool that reads my refrigerator and suggests dinners that work for both my husband’s allergies and my weight goals.) Yousuf’s was the sharpest reframe of the panel. If you cannot make the case for AI in your classroom without using the words ChatGPT or Gemini, the work is on the AI literacy side, not on the resistant-teacher side.

The closing frame the room landed on came from another audience contribution: the problem of practice. AI literacy without a real problem to solve is technology for its own sake. With a problem of practice in hand, AI becomes a possible part of a real solution, not the solution itself.

I built a companion site where the tensions we worked through, the framings we offered, and the pieces we didn’t get to say on stage all live. The workbook has six tensions in it.

There is a seventh I couldn’t get in there in time. It is the one I am still sitting with, and the one I want to give more room than a prompt.

It is agentic AI.

I should start by saying what I love about it, because the version of this conversation where I open with my worries doesn’t sound like me, and it isn’t true to my experience.

I love that agentic AI puts non-programmers in the design seat. I have built a handful of tools in the last year, some for productivity, some for reflective practice. The fact that I could build them at all is the new thing. That access matters. It is also, in a roundabout way, what pushed me toward pursuing a more formal programming background. Not because the agents made me feel inadequate, but because they made me feel adjacent to a thing I wanted to understand from the inside.

The design seat is not the driver’s seat

I also have three reasons for caution. The first is the one Kishore Aradhya named at AIxED, and I keep returning to it. Agents are confident when they are wrong. They mislabel. They miscategorize. They invent metadata that looks right because it conforms to a pattern, not because it is true. Non-programmers are less equipped to catch when something looks right but isn’t. I am fortunate to work at a school of computing, where colleagues have been patient and generous in helping me debug when things go off the rails. Not everyone has that safety net.

The second reason is execution. Agentic tools run commands on your machine. The “approve this action” prompt can become theater if you do not fully understand what you are authorizing. Prompt injection is a real concern. I have worked through tutorials with three or more layers of redirects, where the first two were benign and the later ones raised yellow and red flags about where and how my data was being committed. Some folks in our IT department are excited about what I have been building, and they have also been clear that real vetting takes time before any of it touches sensitive data. I think that pacing is right.

The third reason is privacy. Pointing an agent at your folders means handing it whatever lives in them: tax documents, draft manuscripts, FERPA-protected student data, IRB materials. Even with training opt-outs, file contents travel to company servers. Most universities have not issued guidance that keeps pace with the tools, and faculty are largely navigating this on their own.

None of those are dealbreakers. They are problems with solutions, mostly involving time, policy, and someone to call. The harder question is the pedagogy.

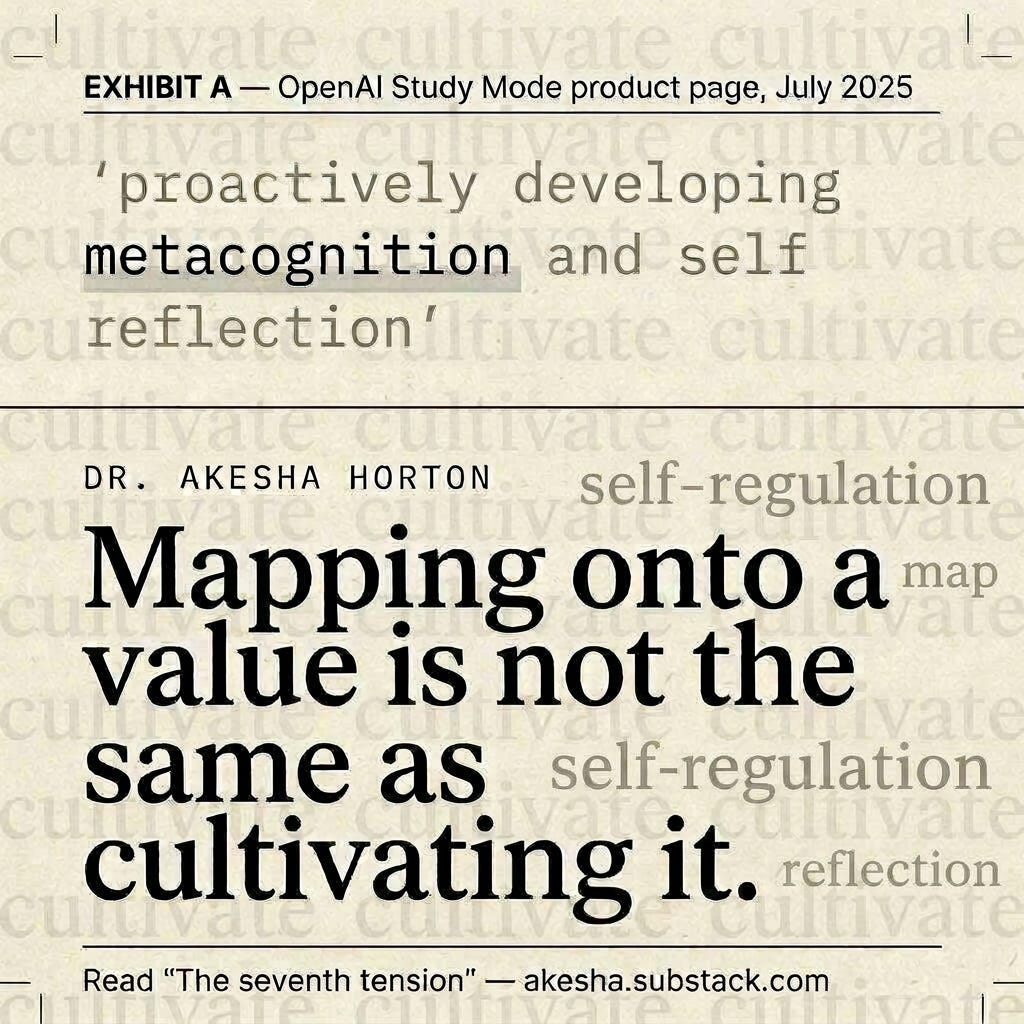

Mapping onto a value is not the same as cultivating it

Here is where my concern actually lives. Agentic AI is increasingly being pitched as scaffolding for the cognitive moves we want students to develop themselves: metacognition, self-regulation, inquiry. The marketing language is not subtle.

When OpenAI launched ChatGPT Study Mode last July, the product page described its behaviors as “proactively developing metacognition and self reflection, fostering curiosity, and providing actionable and supportive feedback.” Read that again. The agent develops metacognition. The agent fosters curiosity. The agent provides the feedback. The student is the object of the verbs, not the subject.

Anthropic’s Claude for Education Learning Mode, launched a few months earlier, frames the agent as guiding “students’ reasoning process rather than providing answers,” prompting them with questions like “How would you approach this problem?” and “What evidence supports your conclusion?” The framing is more careful, but the agent is still the one asking the metacognitive questions. The student is responding to them.

Ethan and Lilach Mollick’s widely cited paper on classroom AI prompts puts the design pattern most plainly: “AI Language Models can help students engage in metacognition as an AI coach by helping direct students to engage in a metacognitive process and articulate their thoughts about a past event or plan for the future” (Mollick & Mollick, 2023). A peer-reviewed framework published last year in Frontiers in Education calls AI “a metacognitive partner” and reports that “AI quantitative feedback improves the accuracy of metacognitive monitoring.”

The bundling shows up most cleanly in a September 2025 EDUCAUSE Review essay co-authored by, among others, Paul LeBlanc and George Siemens. They describe the new student skill profile as developing “metacognition (thinking about their thinking) and meta-emotional skills (managing their emotions), as well as the ability to design and refine AI agents.” Metacognition, emotional regulation, and AI-agent design get folded into the same sentence, as if learning to manage your own mind and learning to manage a tool were the same kind of skill.

I want to name the move all five of those examples are making, because once you see it, you cannot unsee it. The cognitive work we used to ask the student to do (pause, notice, reflect, ask the next question) is being relocated to a piece of software that does that work adjacent to the student. The student watches the metacognition happen. The student answers prompts about it. The student gets feedback on it. What the student does not do, in this design, is the part that was the skill in the first place.

Mapping onto a value is not the same as cultivating it. Sometimes the closest match is the thing most likely to displace what we hoped to grow.

Four patterns worth naming

The same elision shows up across the field in patterns I think are worth naming out loud, so we can argue with them as patterns instead of arguing with each product one at a time.

The reflective scaffold pattern is the most common. The agent prompts the student to notice their thinking. “What’s one thing you’d do differently?” “Where did you get stuck?” My worry is not that those prompts are bad. It is that the noticing was the skill we were trying to build. If the prompt arrives on time, every time, calibrated to the right level, the student never has to develop the internal cue that says this is the moment to stop and check. The scaffolding never comes down because the scaffold became the skill.

The persistent project partner pattern proposes that an agent that remembers your work across sessions is building your follow-through. The pitch is that the agent holds the through-line so you can re-enter the work. Persistence, though, is something a person does, not something an agent provides on a person’s behalf. An agent that remembers your contradictions for you may not be building your persistence so much as building its own continuity while yours quietly atrophies.

The role-player pattern asks the agent to simulate a journal editor, a skeptical colleague, a peer reviewer. The assumption is that an agent with goals and constraints meaningfully approximates the human in that role. That is a sizable assumption. Real editors and colleagues bring institutional context, professional stakes, and accountability. An agent can simulate the shape of those interactions without the weight. The piece I worry about most: students may learn what an editorial exchange looks like without ever building the judgment, the resilience, or the read on power dynamics that real editorial exchanges develop in a person.

There is a fourth pattern that does not have the same problem, and I want to give it real space. The design-the-agent pattern has students making agents instead of using them. Constraining them. Arguing with their behavior. That move treats AI as something to be made legible and contestable rather than simply consumed. The cognitive work stays on the student because the student is the designer.

The burden of proof

None of this is an argument against experimenting. Marc Watkins put a version of this in the Chronicle last fall: higher education, he wrote, “must explore ways of curbing the use of agentic AI tools to automate work that students are supposed to do on their own.” Punya Mishra asked the question more compactly in February: how do we build agents that work with students, not for them?

That is the question I want to ask out loud, alongside both of them. The burden of proof sits with us to show that agentic designs build the agency they claim to support, rather than simulate it convincingly enough that we stop asking.

The six tensions in the workbook are there because they did not resolve on stage and they probably will not resolve for a while. The seventh is here for the same reason. If any of this is sitting in you the way it is sitting in me, the site is where to keep going with it.